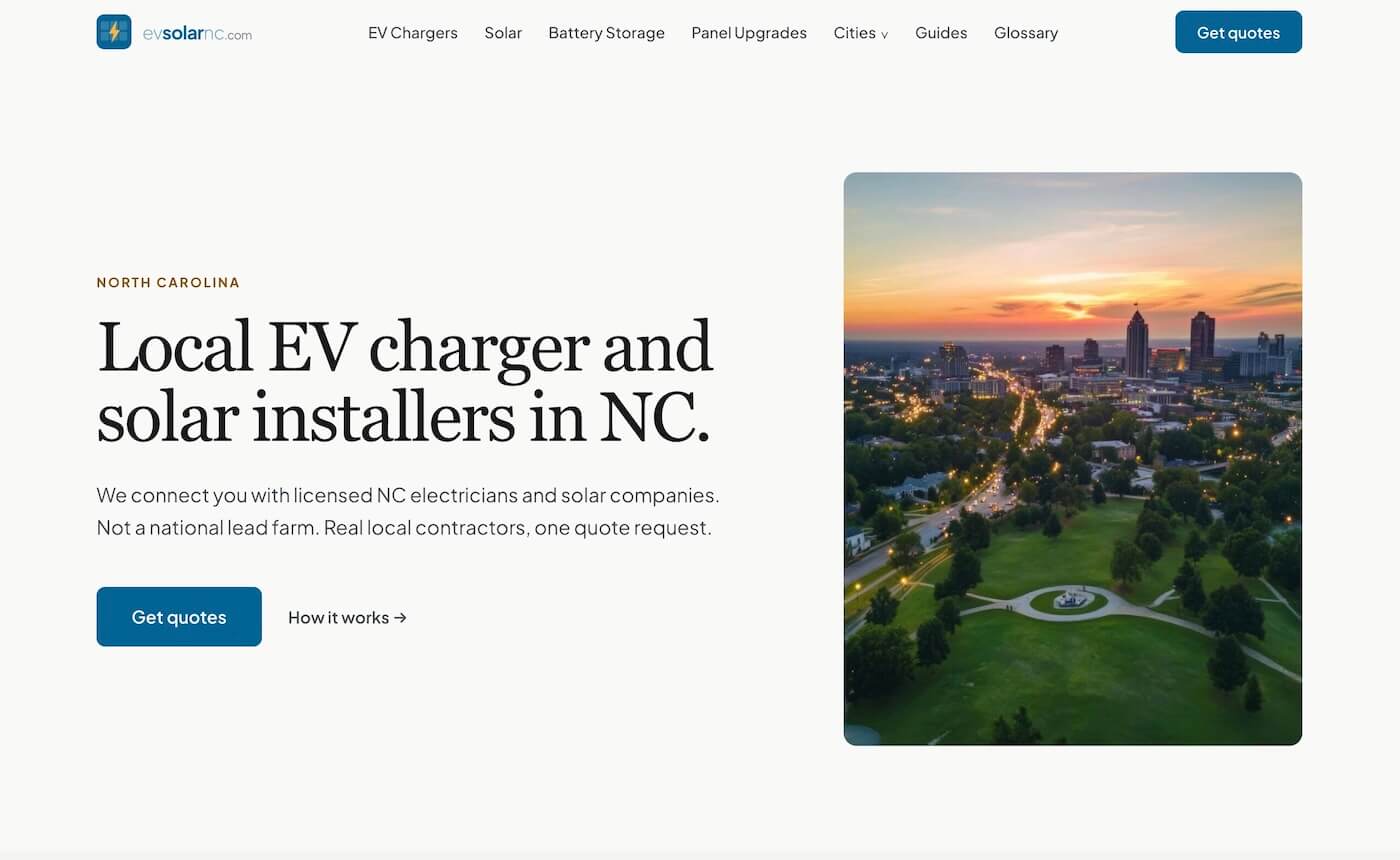

Building a Lead Gen Site for NC Clean Energy Contractors

The second project in my vibe coding series. Same approach as the boulder directory, sharper business problem: a local SEO lead generation site where getting the data wrong could cost a homeowner thousands.


Why This One

The service area wasn't random. I spent a few years at an agency that built websites for contractors: HVAC, electrical, home services. I understood how that market works, roughly what contractors care about, and where the gaps usually are. EV charger installation and solar felt like the obvious next layer on top of that.

Choosing North Carolina specifically took a bit more thought. I did a market pass across the US looking for regions with real EV adoption growth that weren't already oversaturated with content. California was out. Denver was out. The coverage in those markets is thick and the competition is well-funded. North Carolina hit a different spot: adoption is growing, the IRA brought new installer demand, and there was a clear content gap. Nobody had done this well for NC homeowners yet. That felt like the opening.

The four services: EV charger installation, solar, battery storage, panel upgrades. They're related enough to share infrastructure. A homeowner adding an EV charger often needs a panel upgrade. Someone going solar often wants battery backup. The audience overlap made it worth covering all four from the start rather than picking one and expanding later.

Five Days

The boulder gym directory took less than 24 hours from first prompt to a working MVP. This one was more complex, but not dramatically slower. From first prompt to Raleigh and Charlotte pages being live was around five days. Session limits in Claude Code were probably the main constraint: each session ends, you reload context, you continue. Without that, it would've been faster.

One thing I tried differently this time was using Google's Stitch to generate a UI reference before touching Claude Code. I described the design direction, got a visual, then used screenshots of that as a reference when prompting the actual build. It got me closer to the right aesthetic faster. I'm still not completely happy with the UI, but I made a deliberate call early on: get the content right first. Design can be refined. Publishing wrong incentive figures can't be undone cleanly.

The design direction I landed on was "Warm Authority." Deep blue (#006494) and amber (#FDB336) on an off-white background. DM Serif Display for headings. Fraunces felt too quirky for this audience. People looking for a solar installer want to feel like they're on a trustworthy site, not a boutique design studio.

The Incentive Problem

This is the part of the build I'm most proud of, and also the part that took the most judgment.

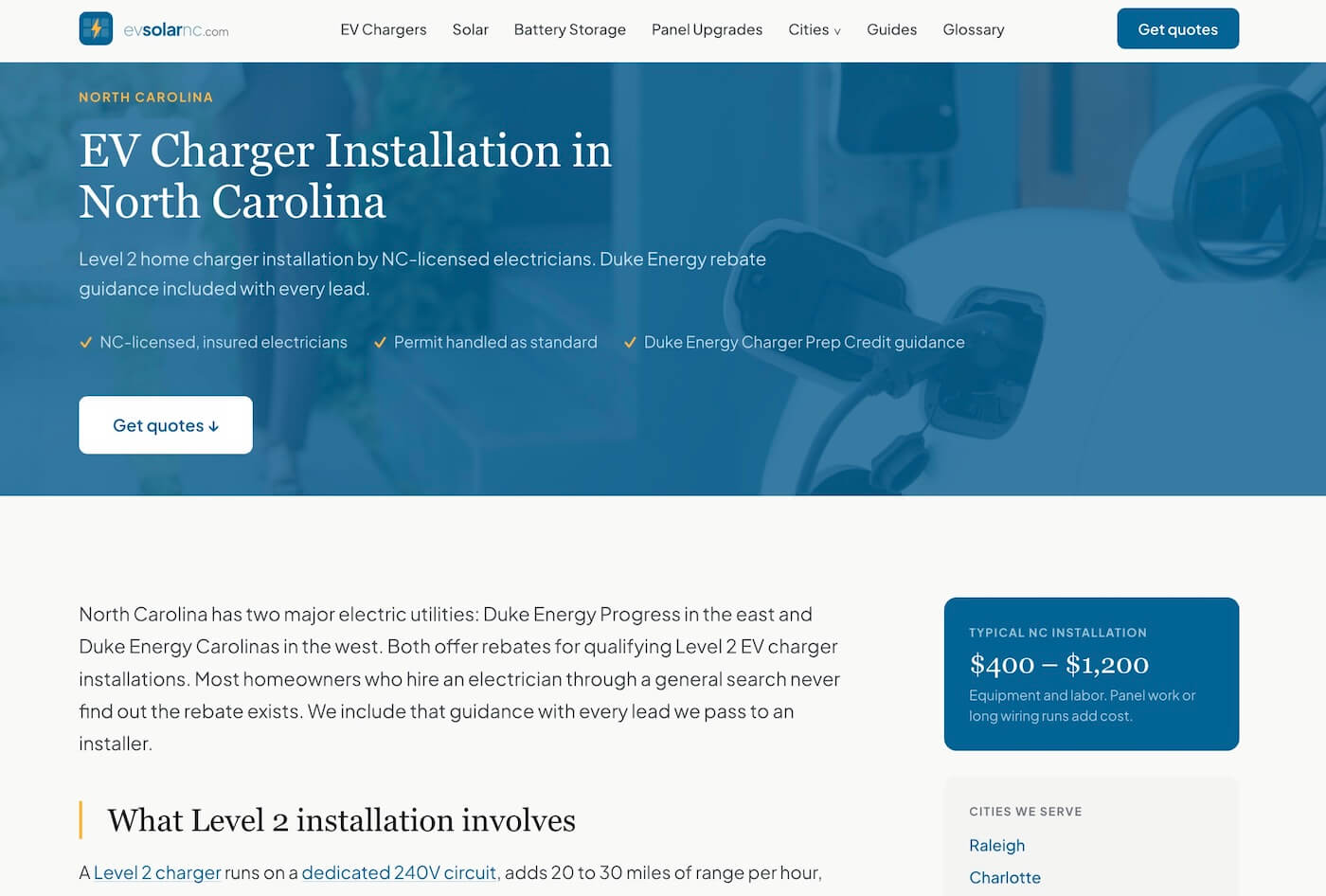

NC clean energy incentives are a mess. Programs expire. They get waitlisted. They have geographic restrictions, utility-specific rules, and funding caps that can run out mid-month. The federal 30% solar credit (Section 25D) expired on December 31, 2025. As of April 2026, I can still find sites telling homeowners it's available. The federal 30C EV charger credit is active, but it's census-tract restricted: most urban NC addresses don't qualify. Most competitors either get this wrong or skip the detail entirely.

Duke Energy's PowerPair program is a good example of why this matters at a local level. Raleigh hit full allocation on April 3, 2026: 0 kW remaining. Charlotte is still open but burning through capacity fast. If that figure is hardcoded somewhere in a markdown file or a template, it stays wrong until someone remembers to update it. Nobody remembers.

The solution was to build incentives.ts as a single source of truth for every incentive figure on the site. Structured fields for status, expiry, geographic restrictions, caveats, source URL, and last-verified date. No figures hardcoded anywhere in content files or templates. Everything pulls from that one file. When something changes, you update it once and it propagates everywhere.
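A minimal sketch of what a file like that might look like. The field names, ids, placeholder URLs, and lastVerified dates are illustrative assumptions, not the actual contents of incentives.ts. Only the statuses and dates mentioned in this post are taken from it.

```typescript
// incentives.ts (illustrative sketch; shape and names are assumptions)

type IncentiveStatus = "active" | "expired" | "waitlisted" | "fully-allocated";

interface Incentive {
  id: string;
  label: string;
  status: IncentiveStatus;
  expires: string | null;        // ISO date, or null when no end date is recorded
  geoRestriction: string | null; // e.g. census-tract or utility-territory limits
  caveats: string[];
  sourceUrl: string;             // primary source the figure was checked against
  lastVerified: string;          // ISO date of the last manual check
}

const INCENTIVES: Record<string, Incentive> = {
  "federal-25d-solar": {
    id: "federal-25d-solar",
    label: "Federal residential solar credit (Section 25D)",
    status: "expired",
    expires: "2025-12-31",
    geoRestriction: null,
    caveats: ["Expired on December 31, 2025."],
    sourceUrl: "https://example.com/placeholder-25d", // placeholder, not a real citation
    lastVerified: "2026-04-01",                       // placeholder date
  },
  "federal-30c-ev-charger": {
    id: "federal-30c-ev-charger",
    label: "Federal EV charger credit (Section 30C)",
    status: "active",
    expires: null, // end date not recorded in this sketch
    geoRestriction: "Eligible census tracts only",
    caveats: ["Most urban NC addresses do not qualify."],
    sourceUrl: "https://example.com/placeholder-30c", // placeholder
    lastVerified: "2026-04-01",                       // placeholder date
  },
  "duke-powerpair-raleigh": {
    id: "duke-powerpair-raleigh",
    label: "Duke Energy PowerPair (Raleigh)",
    status: "fully-allocated",
    expires: null,
    geoRestriction: "Raleigh allocation",
    caveats: ["Allocation reached 0 kW remaining on April 3, 2026."],
    sourceUrl: "https://example.com/placeholder-powerpair", // placeholder
    lastVerified: "2026-04-03",
  },
};

// Templates and content look figures up by id instead of hardcoding them.
function getIncentive(id: string): Incentive {
  const found = INCENTIVES[id];
  if (!found) throw new Error(`Unknown incentive id: ${id}`);
  return found;
}

// A page can decide whether to present an incentive as something to apply for.
function isClaimable(id: string): boolean {
  return getIncentive(id).status === "active";
}
```

Failing loudly on an unknown id is a deliberate choice in this sketch: a typo in a template should break the build rather than silently render nothing.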

That's an architectural decision, not just an organisational one. It made fact-checking a workflow, not an afterthought. Before any guide went live, I verified every figure against primary sources: Duke Energy's actual program pages, IRS guidance, NC DEQ. Claude's web crawler was useful but not reliable enough to trust on its own for this. The manual oversight wasn't removable. That's fine. On a site where a wrong number could send someone to apply for a program that doesn't exist, the manual check is the right call.

Built to Scale

The content architecture was designed from day one so that adding a new city is a data problem, not a build problem.

City-first URLs, two-tier structure

Every service page lives under its city: /raleigh/ev-charger-installation, /charlotte/solar-installation. This is the right structure for local SEO. It makes the geographic intent explicit in the URL, not buried in a parameter or a subdomain.

Cities are split into two tiers, based on population, growth trajectory, and EV adoption rates. Tier-one cities get full coverage: separate pages for each of the four service categories. Tier-two cities get a single consolidated page covering all services.

Tier one: Raleigh, Charlotte, Lake Norman. Tier two: Greensboro, Wilmington, Wake Forest, Cary, Apex, Holly Springs, Chapel Hill, Huntersville. Eleven cities live, with the architecture already in place to add more.
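The two-tier rule can be expressed as a small function from city data to URL paths. This is a sketch under assumptions: the city slugs, the battery and panel-upgrade service slugs, and the tier-two path shape (a single page at the city root) are hypothetical. Only /raleigh/ev-charger-installation and /charlotte/solar-installation appear in this post.

```typescript
type Tier = 1 | 2;

interface City {
  slug: string;
  tier: Tier;
}

// The first two slugs match the URLs quoted above; the last two are assumed.
const SERVICE_SLUGS = [
  "ev-charger-installation",
  "solar-installation",
  "battery-storage",
  "panel-upgrades",
] as const;

const CITIES: City[] = [
  { slug: "raleigh", tier: 1 },
  { slug: "charlotte", tier: 1 },
  { slug: "lake-norman", tier: 1 },
  { slug: "greensboro", tier: 2 },
  { slug: "wilmington", tier: 2 },
  { slug: "wake-forest", tier: 2 },
  { slug: "cary", tier: 2 },
  { slug: "apex", tier: 2 },
  { slug: "holly-springs", tier: 2 },
  { slug: "chapel-hill", tier: 2 },
  { slug: "huntersville", tier: 2 },
];

// Tier one: one page per service. Tier two: one consolidated page.
function pathsForCity(city: City): string[] {
  if (city.tier === 1) {
    return SERVICE_SLUGS.map((service) => `/${city.slug}/${service}`);
  }
  return [`/${city.slug}`];
}

const allCityPaths: string[] = CITIES.flatMap(pathsForCity);
```

Promoting a city from tier two to tier one is then a one-field change in the data, which is the point of the structure.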

Content collections with schema

Each city/service page is driven by a frontmatter schema in Astro's content collections: costLow, costHigh, costNote, FAQs, lastVerified, noindex. The templates are already built. A new city means filling in the data. The layout, the structure, the schema markup: none of that needs to be recreated.
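To make the frontmatter contract concrete, here is a plain TypeScript sketch of those fields with a few sanity checks. On the real site this lives in an Astro content collection schema. The version below avoids Astro imports so it stands alone, and the FAQ shape, the validation rules, and the sample values are all assumptions for illustration.

```typescript
interface Faq {
  question: string;
  answer: string;
}

// Mirrors the frontmatter fields named above; the exact types are assumed.
interface ServicePageData {
  costLow: number;
  costHigh: number;
  costNote: string;
  faqs: Faq[];
  lastVerified: string; // ISO date, e.g. "2026-04-03"
  noindex: boolean;
}

// Returns a list of problems; an empty list means the entry passes.
function checkServicePage(data: ServicePageData): string[] {
  const problems: string[] = [];
  if (data.costLow < 0 || data.costHigh < 0) {
    problems.push("costs must not be negative");
  }
  if (data.costLow > data.costHigh) {
    problems.push("costLow must not exceed costHigh");
  }
  if (!/^\d{4}-\d{2}-\d{2}$/.test(data.lastVerified)) {
    problems.push("lastVerified must be an ISO date (YYYY-MM-DD)");
  }
  if (data.faqs.some((f) => f.question.trim() === "" || f.answer.trim() === "")) {
    problems.push("every FAQ needs a question and an answer");
  }
  return problems;
}

// Placeholder values only; these are not real cost figures from the site.
const samplePage: ServicePageData = {
  costLow: 100,
  costHigh: 200,
  costNote: "Placeholder note.",
  faqs: [{ question: "Placeholder question?", answer: "Placeholder answer." }],
  lastVerified: "2026-04-03",
  noindex: false,
};
```

Running a check like this at build time means a new city with a swapped cost range or a missing verification date fails before it ships.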

The glossary as SEO infrastructure

Twenty-one glossary terms live on the site, each with a prose explanation and a "When you're getting quotes" section that bridges informational intent toward commercial intent. Schema markup: DefinedTerm, BreadcrumbList, FAQPage. These pages won't rank overnight. They're a long-game play: informational queries funnel toward service pages over time. Building them now means they age in while the transactional pages are finding their footing.
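For reference, a DefinedTerm block for one glossary entry could be generated along these lines. This is a sketch: the GlossaryTerm shape, the /glossary/ path, and the example.com base URL are assumptions, and only standard schema.org properties (name, description, url) are used.

```typescript
interface GlossaryTerm {
  term: string;
  definition: string;
  slug: string;
}

// Builds the JSON-LD payload for a <script type="application/ld+json"> tag.
function definedTermJsonLd(entry: GlossaryTerm, siteUrl: string): string {
  const payload = {
    "@context": "https://schema.org",
    "@type": "DefinedTerm",
    name: entry.term,
    description: entry.definition,
    url: `${siteUrl}/glossary/${entry.slug}`, // path shape is assumed
  };
  return JSON.stringify(payload);
}

// Hypothetical entry for illustration.
const sampleTermJson = definedTermJsonLd(
  { term: "Panel upgrade", definition: "Placeholder definition.", slug: "panel-upgrade" },
  "https://example.com",
);
```

Generating the markup from the same data that renders the page keeps the structured data and the visible definition from drifting apart.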

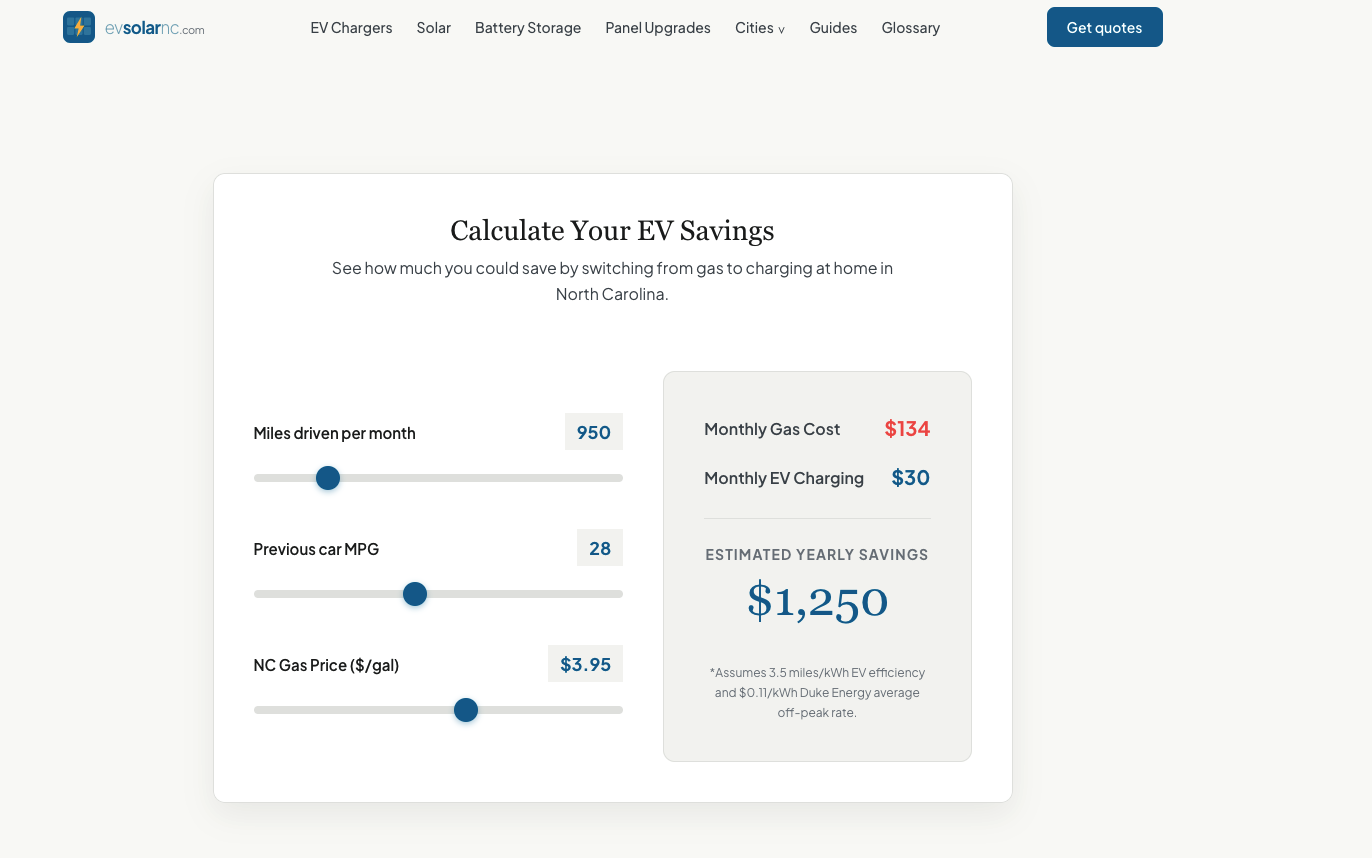

EV savings calculator

A calculator that estimates how much a homeowner saves by switching to an EV, based on their current driving habits and local energy rates. It gives visitors a concrete, personalised number rather than a generic claim, and it keeps people on the page longer. A useful tool is also a more natural entry point to a contact form than a headline.
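The arithmetic behind a calculator like this is simple: annual fuel cost minus annual charging cost. The sketch below is one plausible formulation, not the site's actual formula. The input names are hypothetical, and it ignores factors such as public charging prices or time-of-use rates.

```typescript
interface DrivingProfile {
  milesPerYear: number;
  gasMpg: number;                // current vehicle fuel economy
  gasPricePerGallon: number;     // dollars
  evKwhPerMile: number;          // EV efficiency
  electricityRatePerKwh: number; // dollars, home charging rate
}

// Estimated yearly savings in dollars, rounded to cents.
function estimateAnnualEvSavings(p: DrivingProfile): number {
  if (p.gasMpg <= 0) throw new Error("gasMpg must be positive");
  const gasCost = (p.milesPerYear / p.gasMpg) * p.gasPricePerGallon;
  const evCost = p.milesPerYear * p.evKwhPerMile * p.electricityRatePerKwh;
  return Math.round((gasCost - evCost) * 100) / 100;
}
```

With round placeholder inputs (12,000 miles a year, 30 mpg, $3.00 per gallon, 0.3 kWh per mile, $0.12 per kWh), fuel comes to $1,200 and charging to $432, so the estimate is about $768 a year. Those inputs are for checking the math only and are not real NC rates.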

Two Audiences

A lead gen site needs supply and demand. Most people building these focus entirely on the demand side (homeowners) and treat the supply side (contractors) as an afterthought. I tried not to do that.

The for-contractors page is separate infrastructure. It's noindex: true. It's not trying to rank. It's a cold outreach tool: a clear explanation of the model, an Angi comparison grid, and a contact path. The distinction matters because the message for a contractor is completely different from the message for a homeowner. Conflating the two audiences would weaken both.

Honestly, I haven't found the contractors yet. I made a deliberate call to launch before solving the supply side. The reasoning: I didn't want the search for contractors to block the site from going live, and I wanted real lead volume before pitching the model to anyone. Once leads come in, I'll have something concrete to show. Right now the model is: homeowner submits a form, an email comes in via Resend, and I route it to the right contractor manually. That's the V1. It doesn't scale, but it doesn't need to yet.

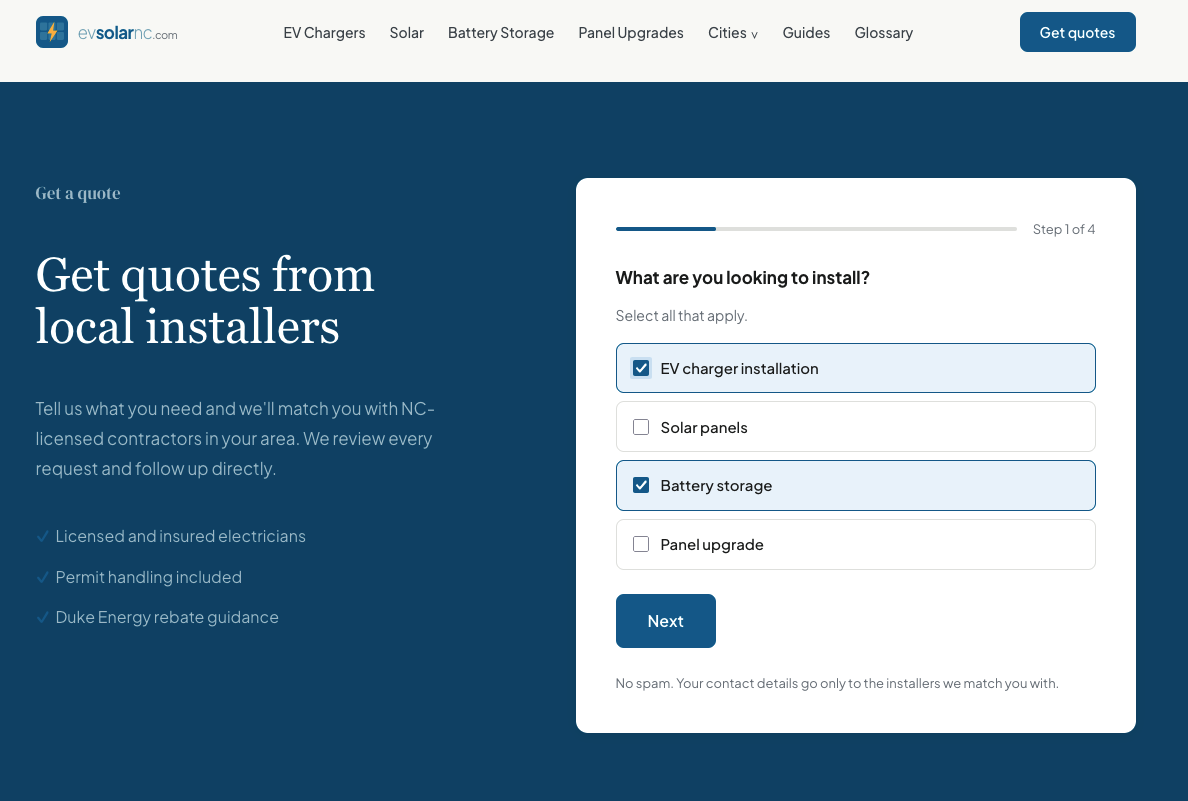

The Lead Form

The contact form is a five-step wizard. Step 2 asks about property type and usage, which is specific to solar and EV charger installs. If a homeowner only selects panel upgrade, step 2 doesn't appear: it's not relevant, and extra steps kill completion rates. That's the kind of conditional logic that isn't glamorous but matters when conversion is the goal.
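The skip rule is easy to state as code. In this sketch the step ids (other than the property step) and the service keys are hypothetical names, since the post only describes what step 2 asks and when it is hidden.

```typescript
type Service = "ev-charger" | "solar" | "battery" | "panel-upgrade";
type StepId = "services" | "property" | "details" | "contact" | "review";

// Full five-step sequence; "property" is step 2.
const ALL_STEPS: StepId[] = ["services", "property", "details", "contact", "review"];

// Step 2 only applies to solar and EV charger installs.
function visibleSteps(selected: Service[]): StepId[] {
  const needsPropertyStep = selected.some((s) => s === "solar" || s === "ev-charger");
  return ALL_STEPS.filter((step) => step !== "property" || needsPropertyStep);
}
```

Deriving the step list from the selection, rather than hiding a step with a flag in the UI, keeps the progress indicator and the "next" button in agreement about how many steps remain.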

There's a hidden source_url field on every form submission. It captures which city and service page the lead came from. When you're routing leads manually and eventually want to know which pages are converting, that field is the data you need. I added it from the start rather than having to retrofit it later.
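Because the URLs are city-first, the city and service can be read straight back out of source_url. A minimal sketch, assuming the field holds either a full URL or a path and that the first two path segments are city and service; the function name and the example.com base are hypothetical.

```typescript
interface LeadSource {
  city: string | null;
  service: string | null; // null for a consolidated tier-two page
}

function parseSourceUrl(sourceUrl: string): LeadSource {
  // The base is only used when sourceUrl is a bare path.
  const pathname = new URL(sourceUrl, "https://example.com").pathname;
  const segments = pathname.split("/").filter((part) => part.length > 0);
  return {
    city: segments[0] ?? null,
    service: segments[1] ?? null,
  };
}
```

Filtering out empty segments means a trailing slash does not change the result, so /raleigh/solar-installation and /raleigh/solar-installation/ attribute to the same page.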

One copy decision worth noting: the CTA says "Get quotes," not "Get a free quote." Contractors sometimes charge for site visits and detailed estimates. Starting the relationship with a technically false promise seemed like a bad way to build trust with either audience.

Where It Got Messy

Session limits

Claude Code has session limits. Each time a session ends, you reload context and continue. It doesn't stop the work, but it adds friction and slows the momentum of a long build. This was probably the biggest structural constraint on speed.

The fact-checking overhead

I can automate a lot of the content generation. I can't fully automate verification. Claude's crawler pulled figures that were outdated or incomplete. Cross-referencing against primary sources (Duke Energy program pages, IRS publications, NC utility documentation) was manual and stayed manual. I didn't look for a way around it. In this niche, the cost of a wrong number is too high.

Images

I still pick images manually. AI-generated images didn't feel right for a site about real tradespeople doing real work. I don't fully trust them for this context and I'm not sure visitors would either. It's a slower process, but it's one of those things where the manual judgment call is the right one.

Getting indexed

The site launched and didn't get indexed. Not slow indexing: Google couldn't crawl it properly. The cause turned out to be a redirect loop from inconsistent trailing slash handling, combined with a Vercel deployment configuration issue and an SSL inconsistency that let the same URL resolve differently depending on how you arrived at it. Every path Google tried to follow went somewhere it didn't expect.

Claude couldn't diagnose it. The problem was that each individual setting looked correct in isolation. I'd describe the issue, get confirmation the configuration was fine, add more context, get the same answer. The model had been part of the original build, so it kept working from the same assumptions. Breaking out of that required stepping back: using Gemini to crawl the live deployment and map the actual redirect chains, then switching to Codex to do a second pass over the configuration files. A fresh model with no prior context on the build was what finally found the conflict.
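Mapping redirect chains comes down to following each hop until it stops or repeats. The sketch below shows the idea on a hypothetical in-memory table of rules. It is not the site's actual configuration or the tooling that was used, just an illustration of how two rules that each look correct can conflict.

```typescript
// Maps a requested path to the path it redirects to.
type RedirectTable = Record<string, string>;

interface RedirectTrace {
  chain: string[];    // every path visited, in order
  loop: boolean;      // true when a path repeats
  truncated: boolean; // true when maxHops was reached without an answer
}

function traceRedirects(start: string, rules: RedirectTable, maxHops = 10): RedirectTrace {
  const chain: string[] = [start];
  const seen = new Set<string>([start]);
  let current = start;
  for (let hop = 0; hop < maxHops; hop++) {
    const next = rules[current];
    if (next === undefined) {
      return { chain, loop: false, truncated: false }; // chain ends here
    }
    chain.push(next);
    if (seen.has(next)) {
      return { chain, loop: true, truncated: false };
    }
    seen.add(next);
    current = next;
  }
  return { chain, loop: false, truncated: true };
}

// Hypothetical conflict: one layer adds the trailing slash, another strips it.
const conflictingRules: RedirectTable = {
  "/raleigh": "/raleigh/",
  "/raleigh/": "/raleigh",
};
```

Tracing /raleigh through conflictingRules visits /raleigh, then /raleigh/, then /raleigh again, which is reported as a loop. Each rule is defensible on its own; the problem only shows up when the whole chain is followed.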

There was a separate images issue too. The Astro build wasn't copying processed images to the correct output directory, so WebP versions weren't being served. That took its own audit.

The site was in limbo for long enough to be genuinely frustrating. The fix itself wasn't complicated once you knew where to look. Finding it required a human deciding the loop wasn't going to resolve itself and changing the approach.

Small bugs

The usual collection: tables not formatted correctly, contrast issues on certain elements, a sticky component that worked on desktop but broke on mobile. None of these were hard to fix, but they all needed a human to notice them first. Claude does a decent job of self-correcting when you point at a problem, but it can't see the screen.

What Actually Worked

The Stitch + Claude Code workflow

Generating a UI reference in Stitch before starting the build gave me a clearer brief to work from. Instead of describing aesthetics in words and hoping Claude interpreted them correctly, I had a visual. Prompting from a screenshot reference produced better first attempts.

Building the machine before you need it

Every structural decision (URL structure, content collections, glossary, schema markup, the incentives architecture) was made before there was any traffic. That's deliberate. SEO is a slow game. The pages that will eventually rank need to exist and age in before they show up in search results. Building the infrastructure first means the compounding can start earlier.

Not overthinking the supply side

Deciding not to find contractors before launch turned out to be the right call. It removed a blocker that could have delayed everything by weeks. V1 doesn't need to scale. It needs to exist and start collecting signal.

Using three models instead of one

By the end of this build I was working across three different AI systems, each for different things. Claude for the build itself. Gemini for live data: crawling the site, surfacing real-time information, checking search volume for keyword decisions. Codex for code review when something needed a second opinion without the existing context getting in the way.

The indexing problem is the clearest example of why that split matters. A tool that's been part of a build from the start is useful for continuity. It becomes a liability when the problem is something that tool helped create. Codex reviewing the same configuration files without any prior context on the project found what weeks of troubleshooting inside the original build hadn't.

Gemini running live crawls and pulling real search volume data alongside Claude Code as the build environment became a natural workflow division. Not everything needs the same tool. Knowing when to switch was as important as knowing how to use any one of them.

What's Next

More cities

The rollout has started beyond Raleigh and Charlotte. Each new city and region is a data entry problem, not a build problem: the architecture is already there. More coverage means more indexed pages, more geographic reach, and a broader surface area for the site to find its footing in search.

Finding contractors

The supply side needs building once there's lead volume to show. The for-contractors page is ready. The pitch is ready. I'm waiting for the demand side to generate something concrete before going out to recruit the supply.

Automating lead routing

Right now it's manual: email comes in, I route it. That works at low volume. At some point it won't. The source_url field already captures what I need to automate the routing. That work comes after the first real leads.

Keeping the incentive data current

incentives.ts is the living part of this site. Programs will change, allocations will run out, new credits will appear. The architecture handles the update problem, but it still needs someone to notice when something changes and make the update. That's an ongoing editorial commitment, not a one-time build task.
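One way to make the "someone has to notice" part less dependent on memory is a staleness check over the last-verified dates. This is a sketch of the idea, not something the site is confirmed to run: the entry shape, the function names, and the 30-day threshold are all assumptions.

```typescript
interface VerifiedEntry {
  id: string;
  lastVerified: string; // ISO date, e.g. "2026-04-03"
}

const MS_PER_DAY = 24 * 60 * 60 * 1000;

// Whole days between an ISO date (parsed as UTC midnight) and a reference time.
function daysSinceVerified(isoDate: string, now: Date): number {
  return Math.floor((now.getTime() - new Date(isoDate).getTime()) / MS_PER_DAY);
}

// Returns the ids whose last manual check is older than maxAgeDays.
function staleEntryIds(entries: VerifiedEntry[], now: Date, maxAgeDays = 30): string[] {
  return entries
    .filter((entry) => daysSinceVerified(entry.lastVerified, now) > maxAgeDays)
    .map((entry) => entry.id);
}
```

A check like this does not verify anything by itself. It only produces a list of entries that are due for another manual pass against the primary sources.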

The Real Lesson

The boulder gym directory was a methodology test. This one is a business bet. The question isn't whether I can build the site; that's settled. It's whether the site can generate real leads, whether I can build a contractor network around them, and whether the model holds up when it's handling real money for real homeowners.

The incentive data problem turned out to be the most important thing I solved in this build. Not because it was technically hard, but because it forced a design decision that makes the site meaningfully more trustworthy than the alternatives. In a niche where the stakes are high and a lot of the existing content is stale or wrong, being accurate is a competitive position. That's worth building around.

Five days from first prompt to a live site with structured content, schema markup, a working lead form, and city pages for two major NC metros. I'm still figuring out some pieces. But the machine is running. Now I find out if it works.